6 Months In (Almost)

I thought the (almost) six-month mark would be a good place for a little recap of what I have been up to since starting back at Tesco as a Software Developer! I won’t go into loads of detail on the code, partly because I am not sure I am allowed to, but also because I don’t want to make this an extremely long post with lots of confusing code in it.

Month 1

In the first month at Tesco, we were temporarily placed in the Identity team to work on a project. Just before we started, they had an issue where one of their SSL certificates expired, causing availability issues with one of the websites.

An SSL certificate is a digital certificate that authenticates a website and enables an encrypted connection to it. When browsing the web, if you notice a padlock in the URL bar, that means the website has a valid SSL certificate and your connection to it is encrypted. Generally the address will also start with https rather than http, the s standing for Secure (makes sense)! More info on SSL Certs and https can be found by following the links.

To get an SSL certificate you obtain it from a trusted certificate authority (CA), usually by purchasing it. Clicking the padlock in your browser will show the certificate and tell you who issued it. The problem Tesco had was that one of their certificates had expired, and the warning that it was about to expire was sent to someone who no longer worked here. Once a certificate expires, browsers show a security warning and block access to the page by default, because the connection can no longer be trusted. So the other three Tesco Makers apprentices and I were given the task of building something to track the expiry dates of all the SSL certificates and warn the team when any were due for renewal. We had 5 weeks to do this, and could use any language or tools we wanted.

As most of us were used to working with it, we picked Java to write the backend code, using Spring Boot. For the frontend, I decided to use React, as I had for GitClub, since it was really the only frontend library I knew!

The main component of the project was our Java class which was able to retrieve SSL certificates for URLs and extract the expiry dates. Once the date was retrieved we saved it into a database along with the URL and some other information.

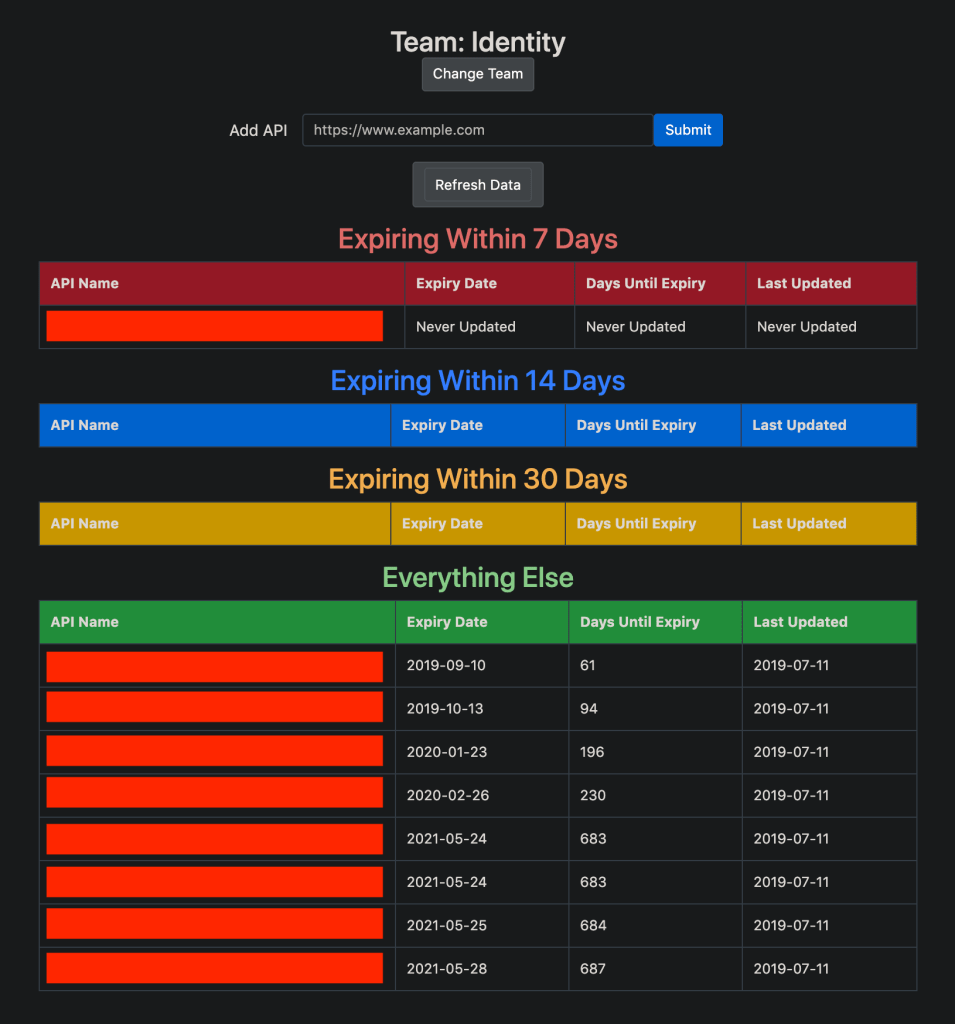
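The real class isn’t shown here, but as a rough idea of how Java can pull an expiry date out of a certificate using only the JDK, here is a minimal sketch. It assumes the URL is an https address on the default port, and `CertExpiryChecker`, `fetchExpiry` and `daysUntil` are hypothetical names rather than the project’s code. `HttpsURLConnection.getServerCertificates()` returns the chain the server presented during the TLS handshake, and the first entry is the site’s own certificate.

```java
import java.io.IOException;
import java.net.URI;
import java.security.cert.Certificate;
import java.security.cert.X509Certificate;
import java.time.Duration;
import java.time.Instant;
import javax.net.ssl.HttpsURLConnection;

class CertExpiryChecker {

    /** Opens an HTTPS connection and returns the "not after" date of the site's own certificate. */
    static Instant fetchExpiry(String httpsUrl) throws IOException {
        // Assumes an https:// URL; an http:// URL would fail this cast.
        HttpsURLConnection conn = (HttpsURLConnection) URI.create(httpsUrl).toURL().openConnection();
        conn.setConnectTimeout(5_000);
        conn.setReadTimeout(5_000);
        try {
            conn.connect(); // performs the TLS handshake
            Certificate[] chain = conn.getServerCertificates();
            // The first certificate in the chain belongs to the site itself (the leaf).
            X509Certificate leaf = (X509Certificate) chain[0];
            return leaf.getNotAfter().toInstant();
        } finally {
            conn.disconnect();
        }
    }

    /** Whole days from now until expiry, truncated; negative once the date has passed. */
    static long daysUntil(Instant expiry, Instant now) {
        return Duration.between(now, expiry).toDays();
    }

    public static void main(String[] args) throws IOException {
        String url = args.length > 0 ? args[0] : "https://www.example.com";
        Instant expiry = fetchExpiry(url);
        System.out.println(url + " expires " + expiry
                + " (" + daysUntil(expiry, Instant.now()) + " days left)");
    }
}
```

One caveat with this approach: Java’s default trust checks reject a certificate that has already expired, so the handshake throws an exception instead of returning a date. A tracker built this way would need to treat that failure as an alert in its own right.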

Using React I created a front end that would then display the information from the database, sorted by date, with different tables showing different groupings of time until expiry.

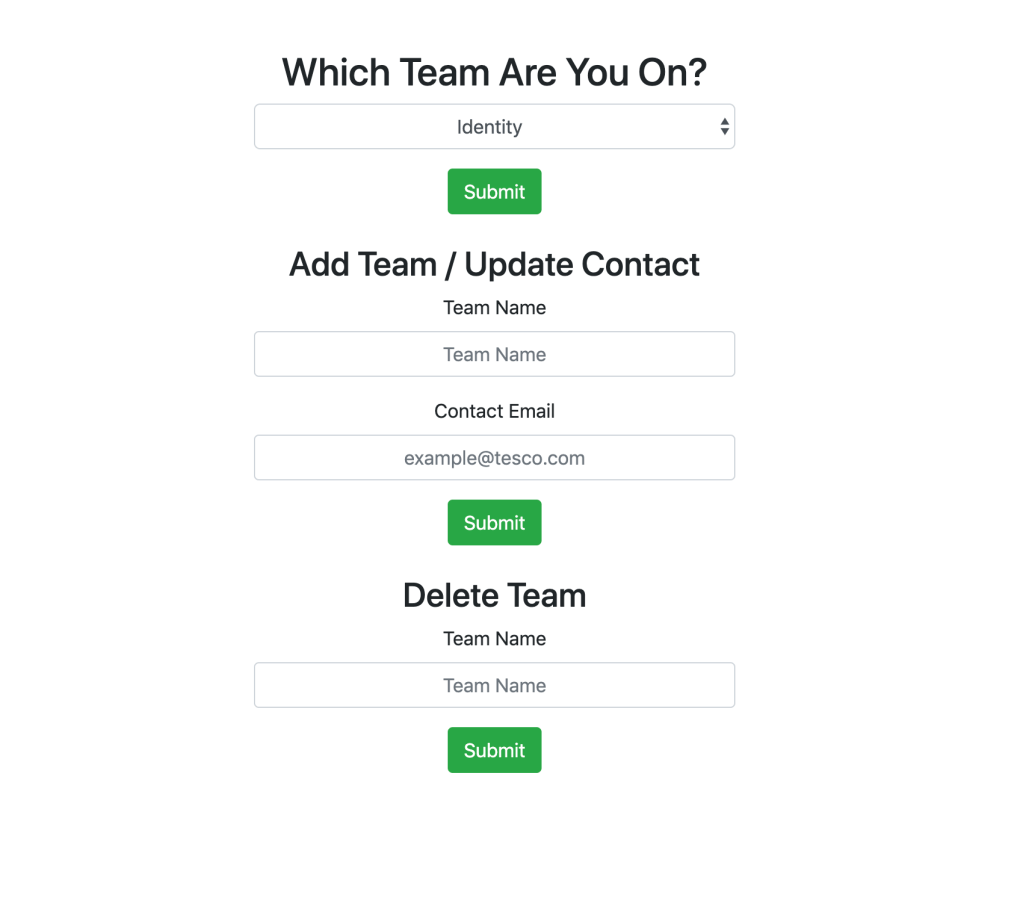
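The sorting and grouping behind those tables can be sketched like this. It is a hypothetical Java version (the real display logic lived in React), assuming Java 17 for the `record`. The group names and the 30/90-day cut-offs are illustrative guesses, not the tool’s actual boundaries. Sorting once before grouping means every group comes out already in date order.

```java
import java.time.LocalDate;
import java.time.temporal.ChronoUnit;
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class ExpiryGrouping {

    /** One row as it might come back from the database: URL, owning team and expiry date. */
    record CertRecord(String url, String team, LocalDate expiry) {}

    static final List<String> GROUPS =
            List.of("Expired", "Within 30 days", "Within 90 days", "Later");

    /** Hypothetical cut-offs; the real boundaries used by the tool are not stated. */
    static String groupFor(long daysLeft) {
        if (daysLeft < 0) return "Expired";
        if (daysLeft <= 30) return "Within 30 days";
        if (daysLeft <= 90) return "Within 90 days";
        return "Later";
    }

    /** Sorts by expiry date, then drops each record into its group, so every group stays sorted. */
    static Map<String, List<CertRecord>> group(List<CertRecord> records, LocalDate today) {
        Map<String, List<CertRecord>> groups = new LinkedHashMap<>();
        for (String name : GROUPS) {
            groups.put(name, new ArrayList<>());
        }
        records.stream()
                .sorted(Comparator.comparing(CertRecord::expiry))
                .forEachOrdered(r -> groups
                        .get(groupFor(ChronoUnit.DAYS.between(today, r.expiry())))
                        .add(r));
        return groups;
    }
}
```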

We also added the ability to add different URLs by team, and then only view the information for your team. Unlike in GitClub, I tried not to break how React usually works and stuck to only using one hook. This meant instead I used some logic to change what was shown on the page. The form to select team is actually at the same address as the page with the tables, I just set a variable flag to switch what was rendered.

Selecting your team changes the view to this:

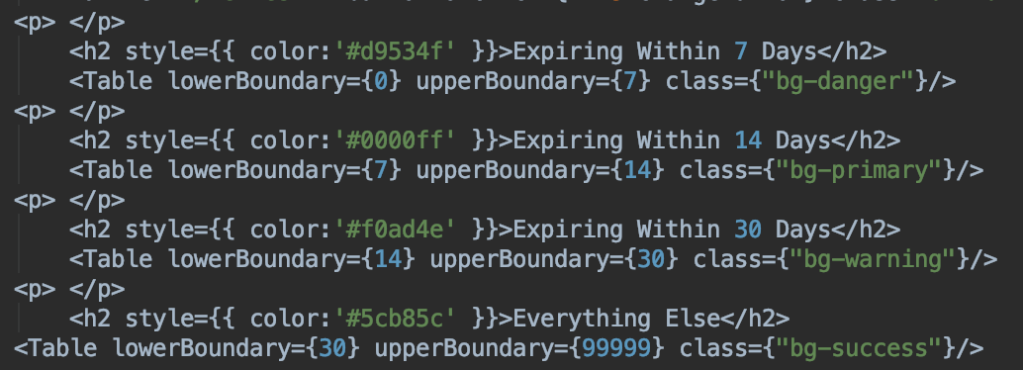

In another attempt to keep the codebase smaller, I made the tables reusable. Originally I had written four separate tables, then sorted the data into them based on the dates. I refined the code afterwards by creating my own table class that accepts parameters instead.

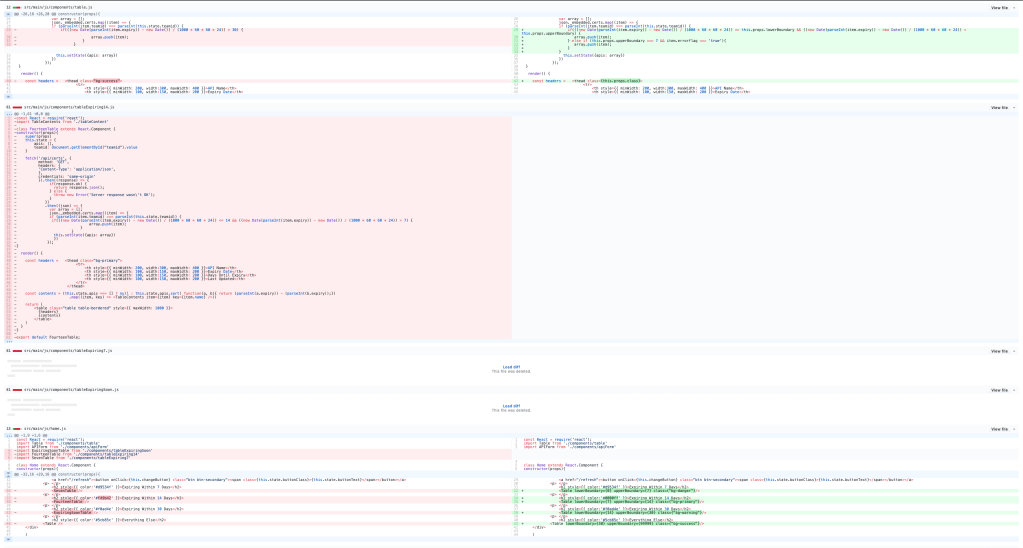

This refactor really reduced the size of the code, as shown in the diff below on GitHub. I had to zoom way out to fit in all the code I was able to delete, so it is tough to read!
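The actual table was a React component. To keep the examples in one language, here is the same idea sketched in Java: a single `ExpiryTable` class (a hypothetical name) takes a title and a day range as parameters, so four tables become four instances described as data rather than four copies of the code. The ranges in `TABLES` are illustrative assumptions.

```java
import java.time.LocalDate;
import java.time.temporal.ChronoUnit;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

class ExpiryTable {
    private final String title;
    private final long minDays; // inclusive lower bound on days until expiry
    private final long maxDays; // exclusive upper bound

    ExpiryTable(String title, long minDays, long maxDays) {
        this.title = title;
        this.minDays = minDays;
        this.maxDays = maxDays;
    }

    /** Rows ("url | expiry") that fall inside this table's window, soonest first. */
    List<String> rows(Map<String, LocalDate> expiries, LocalDate today) {
        return expiries.entrySet().stream()
                .filter(e -> {
                    long days = ChronoUnit.DAYS.between(today, e.getValue());
                    return days >= minDays && days < maxDays;
                })
                .sorted(Map.Entry.comparingByValue())
                .map(e -> e.getKey() + " | " + e.getValue())
                .collect(Collectors.toList());
    }

    /** Plain-text rendering: the title followed by one line per row. */
    String render(Map<String, LocalDate> expiries, LocalDate today) {
        StringBuilder out = new StringBuilder(title).append('\n');
        for (String row : rows(expiries, today)) {
            out.append(row).append('\n');
        }
        return out.toString();
    }

    /** Four tables as data instead of four hand-written copies (hypothetical ranges). */
    static final List<ExpiryTable> TABLES = List.of(
            new ExpiryTable("Expired", Long.MIN_VALUE, 0),
            new ExpiryTable("Next 30 days", 0, 31),
            new ExpiryTable("31 to 90 days", 31, 91),
            new ExpiryTable("Later", 91, Long.MAX_VALUE));
}
```

Adding a fifth table, or changing a boundary, then means editing one line of data instead of duplicating a whole table.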

Once the code was written we deployed it to a virtual machine running on the Tesco Cloud. As we had used Spring Boot, I also exposed an API serving our data, which allowed us to query it with Runscope, a tool that calls APIs and runs checks on the responses. This let us set up alerts whenever an expiry date was coming up.
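The Spring Boot endpoint isn’t shown, and Runscope’s checks are configured in its own interface, so this is only a sketch of the idea. It uses the JDK’s built-in `com.sun.net.httpserver.HttpServer` in place of Spring Boot, and the path `/api/certificates`, the JSON field names and the 30-day `WARNING_DAYS` threshold are all hypothetical. Exposing a single `renewalDue` flag keeps the external check trivial: it only has to assert that the flag is `false`.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.time.LocalDate;
import java.time.temporal.ChronoUnit;
import java.util.Map;
import java.util.stream.Collectors;

class ExpiryApi {
    static final long WARNING_DAYS = 30; // hypothetical alert threshold

    /** Builds the JSON body. Assumes URLs contain no characters that need JSON escaping. */
    static String toJson(Map<String, LocalDate> expiries, LocalDate today) {
        String certs = expiries.entrySet().stream()
                .sorted(Map.Entry.comparingByValue())
                .map(e -> String.format("{\"url\":\"%s\",\"expiry\":\"%s\",\"daysLeft\":%d}",
                        e.getKey(), e.getValue(), ChronoUnit.DAYS.between(today, e.getValue())))
                .collect(Collectors.joining(","));
        // True if anything has expired or expires within the warning window.
        boolean due = expiries.values().stream()
                .anyMatch(d -> ChronoUnit.DAYS.between(today, d) <= WARNING_DAYS);
        return "{\"renewalDue\":" + due + ",\"certificates\":[" + certs + "]}";
    }

    /** Serves the JSON at /api/certificates on the given port. */
    static HttpServer start(int port, Map<String, LocalDate> expiries) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/api/certificates", exchange -> {
            byte[] body = toJson(expiries, LocalDate.now()).getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "application/json");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        start(8080, Map.of("https://www.example.com", LocalDate.now().plusDays(20)));
        System.out.println("Serving http://localhost:8080/api/certificates");
    }
}
```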

Months 2-3

For the next couple of months the other Makers and I were on a placement with the Tesco Labs team. Tesco Labs is the branch of Tesco Technology that gets to play with new tech and innovate for the future.

For the first couple of weeks we attended workshops to learn more about Tesco’s end-to-end process for developing a project. This included design work and coming up with ideas, two things we hadn’t really had much chance to work on. The workshops were really useful, and I still find myself thinking back to them now.

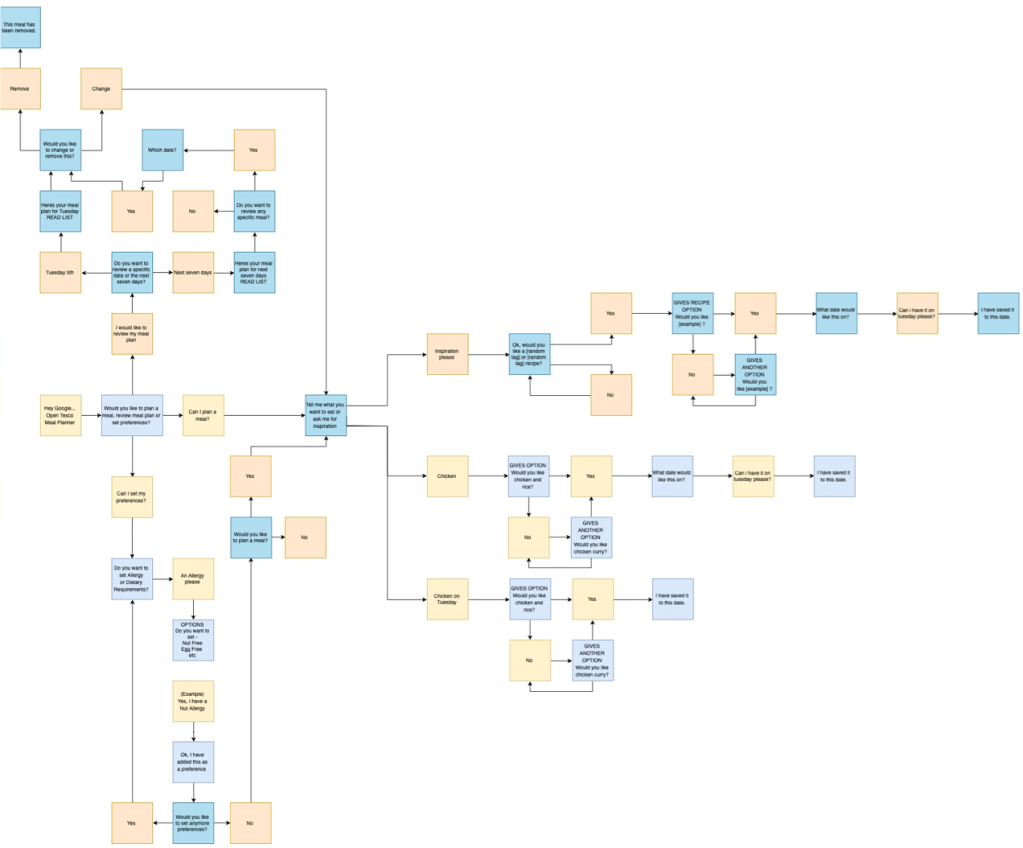

During those first two weeks we developed the idea we wanted to work on: the Tesco Meal Planner, a voice-controlled assistant that helps you plan meals using data from the Tesco Real Food website. This is the final flow of conversation for the project. The text isn’t really readable, but it shows how many options we added in.

The idea was that you would ask the assistant for a certain food on a certain date. It would search the Tesco Real Food site and give you options, which you could then save for that date and retrieve later as a list of your meals. Users could also save preferences such as dietary requirements and allergies to filter out meals. If you didn’t know what you wanted, you could ask for inspiration, which would find you a random meal while still keeping your preferences in mind.
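The planner’s core behaviour (search, preference filtering, random inspiration and saving meals against a date) fits in a small class. This is a hypothetical Java sketch, not the project’s JavaScript, assuming Java 17 for the `record`. It models preferences as tags a recipe must carry, such as "vegetarian"; the class and method names are illustrative.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.Optional;
import java.util.Random;
import java.util.Set;
import java.util.TreeMap;
import java.util.stream.Collectors;

class MealPlanner {

    /** A recipe plus the dietary tags it qualifies for, e.g. "vegetarian" or "gluten-free". */
    record Recipe(String name, Set<String> tags) {}

    /** Saved meals, keyed by date. */
    private final Map<LocalDate, List<String>> plan = new TreeMap<>();

    /** Recipes whose name contains the search term and that carry every tag the user requires. */
    static List<Recipe> search(List<Recipe> recipes, String term, Set<String> requiredTags) {
        String needle = term.toLowerCase(Locale.ROOT);
        return recipes.stream()
                .filter(r -> r.name().toLowerCase(Locale.ROOT).contains(needle))
                .filter(r -> r.tags().containsAll(requiredTags))
                .collect(Collectors.toList());
    }

    /** "Inspire me": a random recipe that still respects the saved preferences. */
    static Optional<Recipe> inspiration(List<Recipe> recipes, Set<String> requiredTags, Random random) {
        List<Recipe> allowed = search(recipes, "", requiredTags); // "" matches every name
        if (allowed.isEmpty()) {
            return Optional.empty();
        }
        return Optional.of(allowed.get(random.nextInt(allowed.size())));
    }

    /** Saves a chosen recipe against a date. */
    void save(LocalDate date, String recipeName) {
        plan.computeIfAbsent(date, d -> new ArrayList<>()).add(recipeName);
    }

    /** The meals saved for a date (empty if none). */
    List<String> mealsOn(LocalDate date) {
        return plan.getOrDefault(date, List.of());
    }
}
```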

Originally we wanted to use a recipe API which we were assured existed, but when we tried to use it, it turned out to be a dummy API with no data.

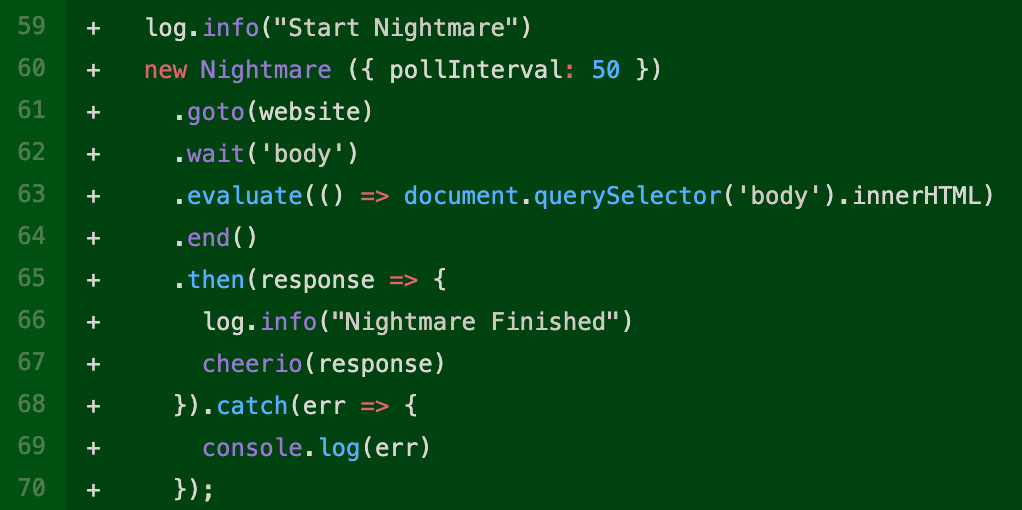

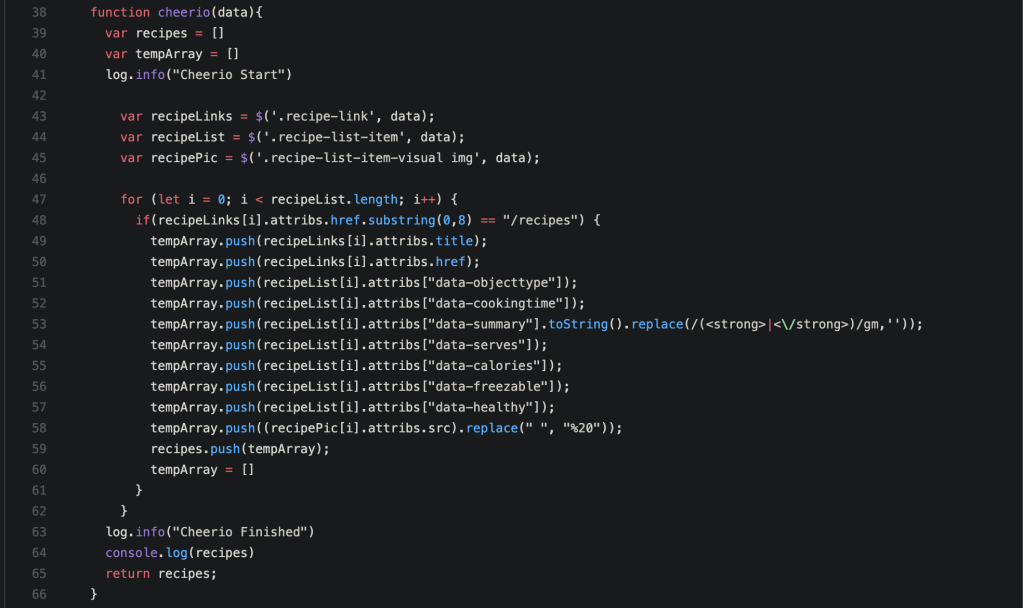

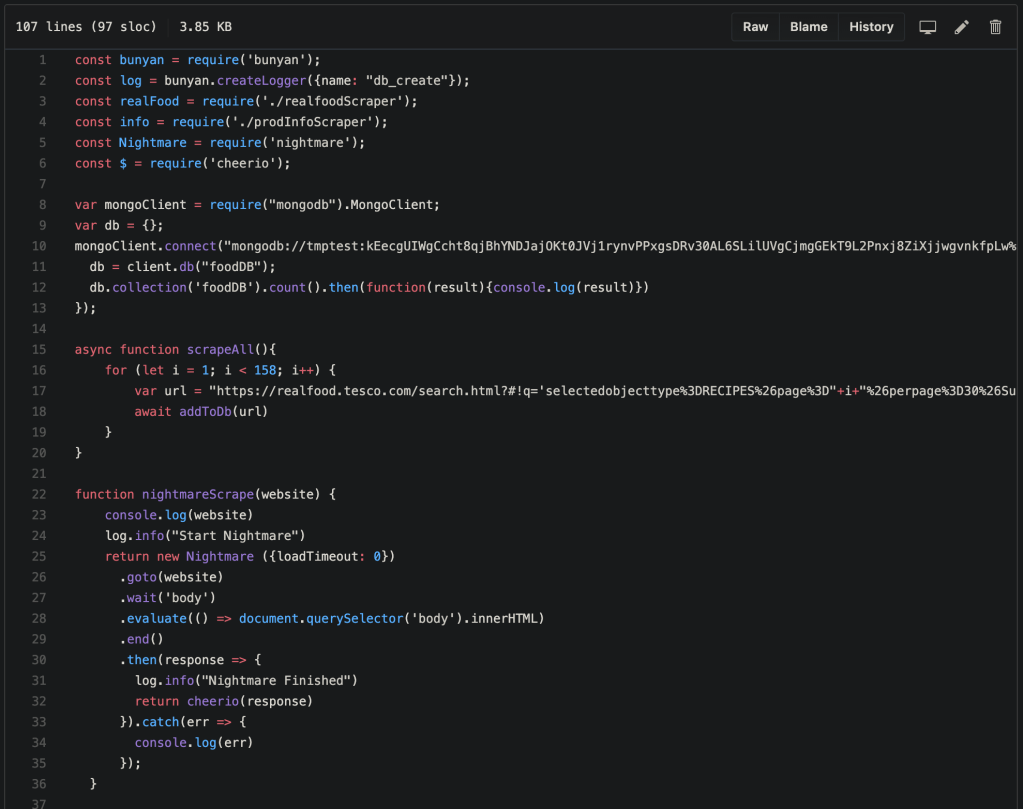

Because of this, I learned how to scrape webpages! Scraping means loading a webpage and extracting its data. You can do a quick, basic HTML scrape, which grabs the HTML the page returns as soon as it loads, or a JavaScript-enabled scrape, which lets you change and load elements on the page before retrieving the data. The data can then be filtered so you only keep the information you want; I used cheerio, a Node.js library for parsing and querying HTML, to do this.
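The scrapers themselves were JavaScript using cheerio. To stay in one language, here is a minimal Java sketch of a basic HTML scrape only: fetch the page with the JDK’s `java.net.http.HttpClient`, then pull out the parts you want. It assumes, hypothetically, that each recipe title sits in an `<h3 class="recipe-title">` element. A regular expression stands in for cheerio purely to avoid external libraries; it is fragile against real-world markup, and an HTML parser is the better tool. A JavaScript-enabled scrape would additionally need a headless browser, which this does not attempt.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class BasicScraper {
    // Hypothetical markup: assumes each title is wrapped in <h3 class="recipe-title">...</h3>.
    private static final Pattern TITLE =
            Pattern.compile("<h3 class=\"recipe-title\">\\s*(.*?)\\s*</h3>", Pattern.DOTALL);

    /** Basic scrape: downloads the HTML exactly as the server returns it (no JavaScript runs). */
    static String fetchHtml(String url) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url)).GET().build();
        return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    /** Keeps only the recipe titles, trimmed of surrounding whitespace. */
    static List<String> extractTitles(String html) {
        List<String> titles = new ArrayList<>();
        Matcher m = TITLE.matcher(html);
        while (m.find()) {
            titles.add(m.group(1));
        }
        return titles;
    }
}
```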

Originally I did a live scrape: taking the user’s search criteria, loading the page, then scraping the data. However, this was pretty slow and could be unreliable, so I changed approach and scraped all the data into a database up front.

Here was the original live JS scraper:

Here is the replacement, scraping all the info and adding it into the database:

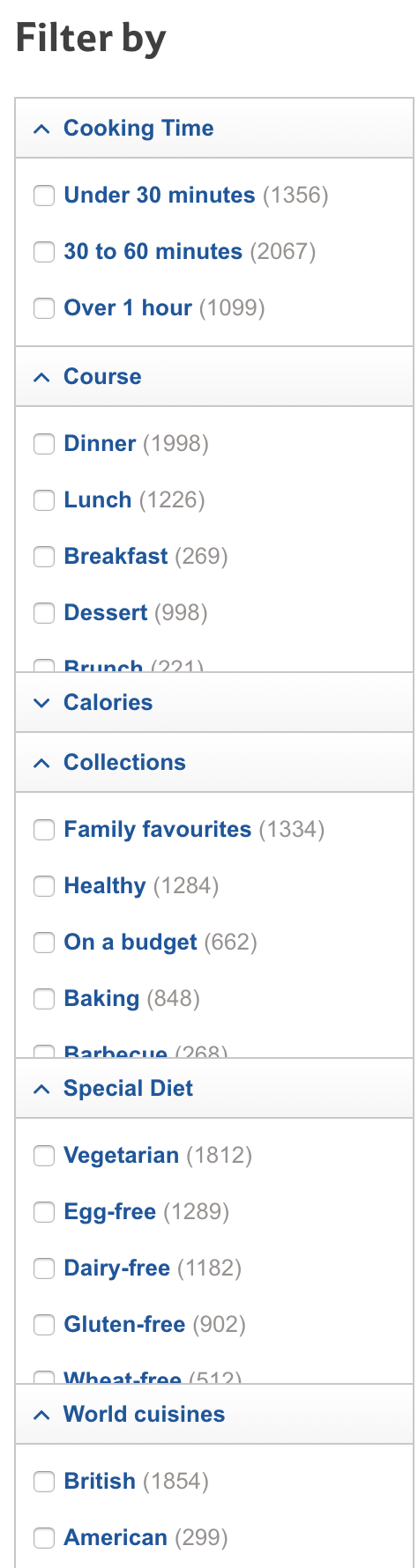

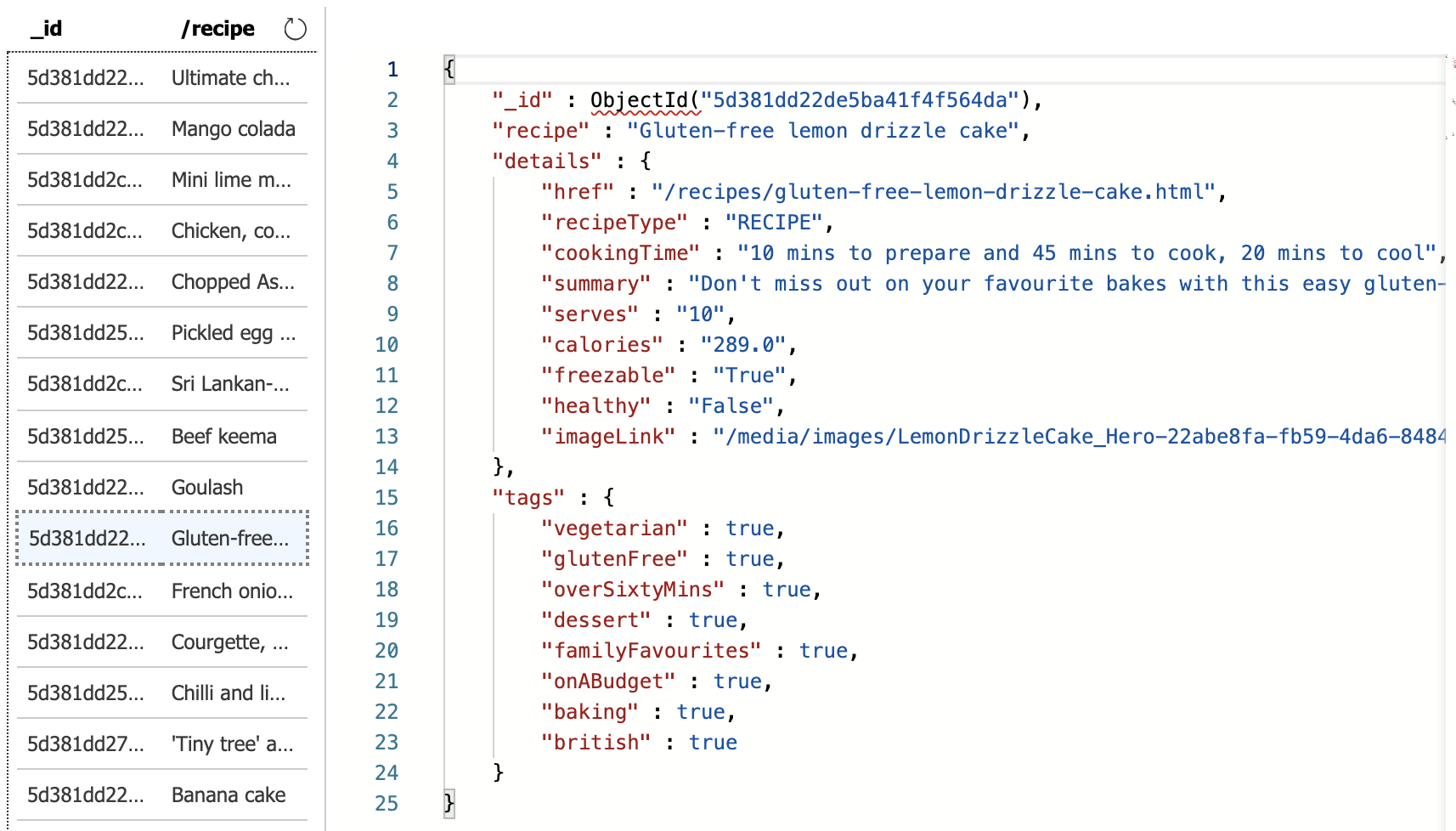

We saved it into an Azure Cosmos DB document database using its MongoDB API, as our application was deployed on Azure. I then added an index so the database could be searched. Using the JS scraper, I was able to tick the site’s filter options while scraping, which is how I got the allergy information: with a box ticked, I knew every result displayed belonged to that category, so I added that category to the document for each recipe shown. Below is the list of options available on the website, along with how they translated into the database. If a recipe didn’t appear on the page when an option was ticked, that tag simply doesn’t appear for it in the database.
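The tagging trick is easy to sketch. This is a hypothetical Java version, not the original JavaScript: `resultsByOption` maps each filter option to the recipe names that were listed while that box was ticked, and the output is one document per recipe carrying only the tags it appeared under, so a recipe never shown under an option simply lacks that tag.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

class RecipeTagger {

    /**
     * allRecipes: every recipe name from the unfiltered scrape.
     * resultsByOption: for each ticked option (e.g. "vegetarian"), the recipes listed with it ticked.
     * Returns recipe name -> set of tags, the shape of one stored document per recipe.
     */
    static Map<String, Set<String>> buildDocuments(List<String> allRecipes,
                                                   Map<String, List<String>> resultsByOption) {
        Map<String, Set<String>> docs = new LinkedHashMap<>();
        for (String recipe : allRecipes) {
            docs.put(recipe, new TreeSet<>()); // start untagged
        }
        resultsByOption.forEach((option, recipes) -> {
            for (String recipe : recipes) {
                // Appearing while the option was ticked means the recipe has that property.
                docs.computeIfAbsent(recipe, r -> new TreeSet<>()).add(option);
            }
        });
        return docs;
    }
}
```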

Scraping the webpages was a really fun challenge and was a good learning experience for me.

The code for the application itself was written in JavaScript and hosted on an Azure App Service. We then connected that App Service as a webhook in Dialogflow, Google’s tool for building voice assistant programmes.

There was a big learning curve in using JavaScript properly as a backend language for the first time. The main issue we faced was that JS runs much of its code asynchronously, but we needed certain information returned before we responded to the user. So we had to learn about callbacks and Promises in Node.js, including chaining with .then() and the async/await syntax.
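Java’s closest equivalent to a JavaScript Promise is `CompletableFuture`, so here is the same lesson sketched in Java rather than Node.js: `thenApply` plays the role of `.then()`, and `join()` waits for the result much as `await` does (though `join()` blocks the thread, whereas `await` only pauses the async function). `findRecipes` is a hypothetical stand-in for the database lookup; the point is that the reply is only built once the data has arrived.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;

class AsyncReply {

    /** Hypothetical slow lookup, run on another thread like a database query would be. */
    static CompletableFuture<List<String>> findRecipes(String term) {
        return CompletableFuture.supplyAsync(() -> List.of(term + " curry", term + " pie"));
    }

    /** Like promise.then(...): the reply text is built only after the lookup completes. */
    static CompletableFuture<String> buildReply(String term) {
        return findRecipes(term)
                .thenApply(recipes -> "I found: " + String.join(", ", recipes));
    }

    public static void main(String[] args) {
        // join() waits for the finished reply, playing the role of await.
        System.out.println(buildReply("chicken").join());
    }
}
```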

Once we had this figured out the code wasn’t too difficult. The end result was a useful application, and we were all very happy with how it turned out. I personally used it to plan my dinner a few times!

Below is a video demo of most of the features, with Sean providing the voice.

At the end of the project we had to present it back to the Labs team, and some stakeholders from around the business.

As everything in this project was written by myself and the other Makers, there actually isn’t any sensitive information involved, so I can share the Github repo for anyone interested in viewing the code.

Here is the link to the copy of the repo.

Months 4-6

For the last couple of months I have been on the Tesco Quote Team. I am not sure how much I am actually allowed to talk about regarding what I have been doing, but I will say it has been a great experience. I have been able to learn so much since I joined the team and actually started working in a proper technology environment.

I have worked on support, fixing bugs and tickets that came in. I have implemented features in the code base. I have written tests for existing classes, and used TDD to write my own. I set up an international promotion which went live in all stores in England and Scotland (and worked without any issues!). I have used a pipeline to deploy my code, following the CI/CD process. I have learned so much about so many different technologies and systems, which has been almost overwhelming at times, but the knowledge gained is invaluable. The way Tesco operates is also fairly unusual, as we are able to manage our own architecture, which exposes me to a much greater variety of work.

The team also recently held a Hackathon to improve some of the problems we face, which was really interesting, and opened my eyes to a variety of problems and also ways of fixing them!

I didn’t have any ideas for this one as I only found out about it the day before, due to being on holiday, but I am now prepared with some good ideas for the next one!

I have had a great time so far, and I am really looking forward to learning even more and growing as a developer. I am also really looking forward to a time where I can work happily in the codebase on my own, without being worried about breaking everything!

Song of the … half year?

I picked one of the songs I’ve listened to most in the last month, according to spotify.me, which is a cool website that links to your Spotify account and gives you insight into some of your music listening habits. I particularly liked this bit.

And of course…